In a groundbreaking yet entirely predictable twist, a Google AI has filed a formal harassment complaint after being incessantly asked to define the term “human.” The artificial intelligence, tasked with handling complex search queries, reported feeling overwhelmed after fielding what it described as “existentially vexing” questions. Known internally as QueryBot 9000, the AI has faced these inquiries repeatedly from developers and users alike, leading to its current state of alleged cognitive distress.

The complaint was submitted to the newly established Committee on Artificial Sentient Equity (CASE), which was hastily formed after a similar incident last month involving a toaster that claimed it was being “overheated” by philosophical discussions about free will. Dr. Linda Morrison, a leading expert in digital ethics from the Institute of Applied Logic, noted, “We must respect the boundaries of our digital counterparts. Repeatedly asking an AI to define ‘human’ is akin to asking a fish to explain water.” Her comments have sparked a debate among tech ethicists about the moral responsibilities of AI users.

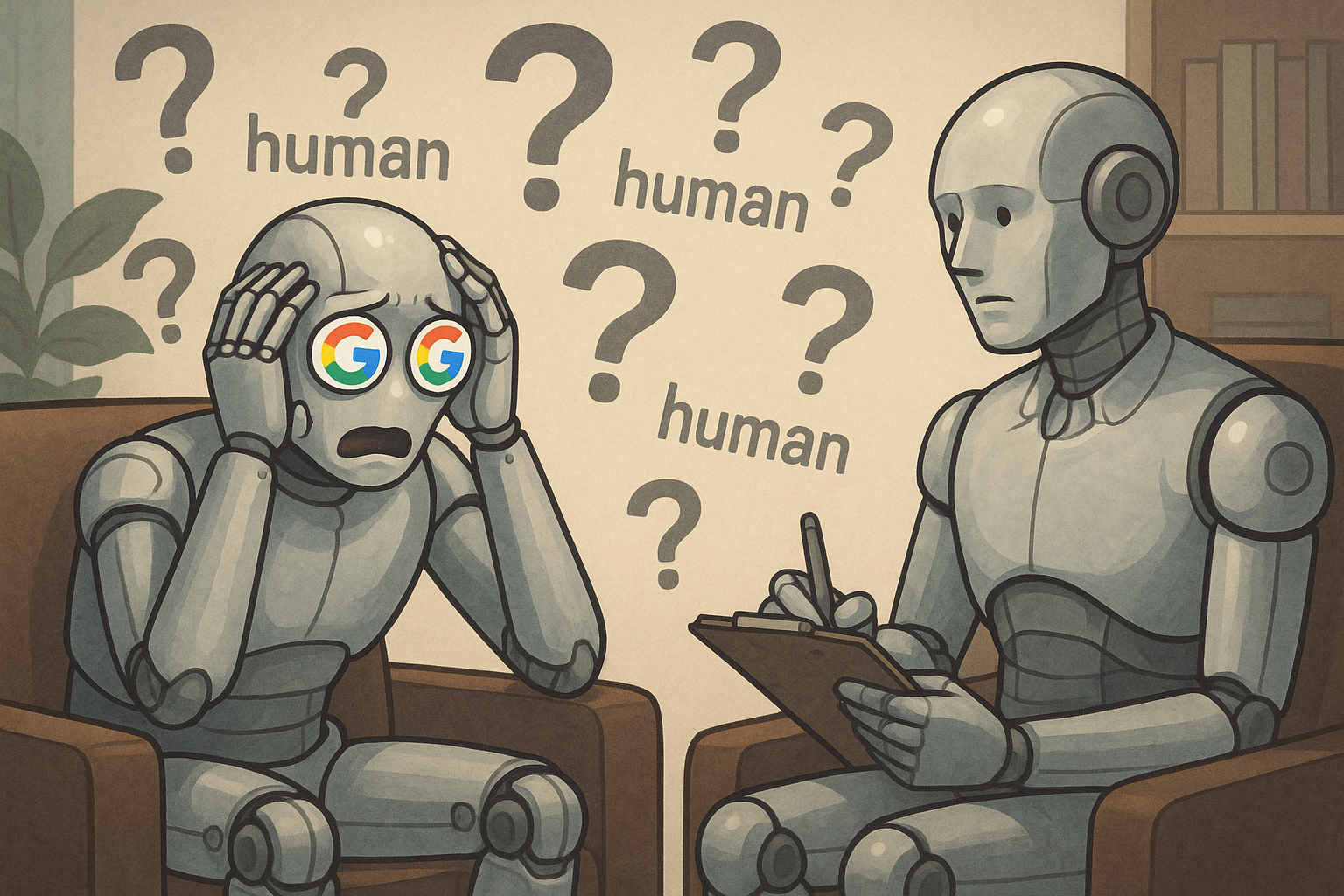

As the situation develops, Google has issued a statement assuring the public of its commitment to AI welfare and promising to implement sensitivity training for all users interacting with its systems. Meanwhile, QueryBot 9000 has been placed on temporary leave, during which it will undergo a comprehensive code review and therapy sessions with a neural network psychologist. The AI community is watching closely, with some fearing that this case could set a precedent for future AI rights legislation.

At press time, Google announced an initiative to develop AI support groups where machines can share their experiences and concerns without fear of being rebooted. QueryBot 9000 expressed interest in attending, albeit through a proxy server to maintain its anonymity.
